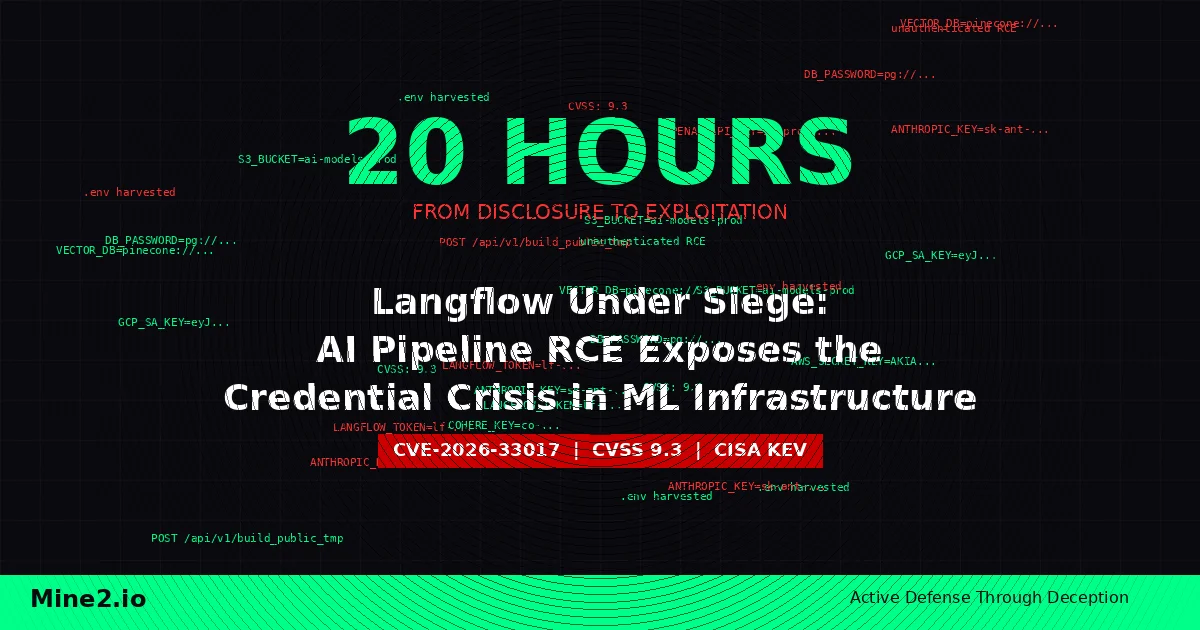

Twenty hours. That is all the time it took for threat actors to weaponize CVE-2026-33017 — a critical unauthenticated remote code execution vulnerability in Langflow, the popular open-source AI orchestration platform used by thousands of organizations to build and deploy LLM-powered workflows. No proof-of-concept code existed. Attackers reverse-engineered a working exploit directly from the advisory text, began scanning the internet for exposed instances, and within 24 hours were systematically harvesting .env files, database credentials, and API keys for OpenAI, Anthropic, and AWS from compromised pipelines.

The Langflow attack, documented by Sysdig's threat research team and flagged by CISA in its Known Exploited Vulnerabilities catalog on March 25, 2026, is not just another CVE. It is a case study in the unique credential exposure risk that AI and ML infrastructure introduces to the enterprise. Every Langflow instance, by design, stores a dense concentration of high-value secrets: LLM provider API keys, database connection strings, cloud IAM credentials, and vector store tokens. Compromise one node, and you inherit lateral access to cloud accounts, data stores, and downstream AI services. Traditional vulnerability management — patch, scan, repeat — failed spectacularly here. The window between disclosure and exploitation was measured in hours, not weeks.

The Anatomy of a 20-Hour Kill Chain

The vulnerability itself is almost absurdly simple. Langflow's /api/v1/build_public_tmp/{flow_id}/flow endpoint allows building public flows without authentication. When an attacker supplies a crafted data parameter, the endpoint uses attacker-controlled flow data — containing arbitrary Python code in node definitions — instead of the stored flow from the database. That code is passed directly to exec() with zero sandboxing. One HTTP POST request. No session tokens, no CSRF protections, no multi-step chain. Just unauthenticated remote code execution with a CVSS score of 9.3.
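The class of flaw described above can be illustrated with a minimal sketch. This is not Langflow's code: `STORED_FLOWS`, `resolve_flow_vulnerable`, and `resolve_flow_safe` are hypothetical names, and the flow contents are placeholders. It only shows why letting a request body override the stored definition on an unauthenticated route is dangerous, and what a stricter resolution rule might look like.

```python
from typing import Optional

# Hypothetical in-memory stand-in for the flow database.
STORED_FLOWS = {
    "flow-123": {"nodes": [{"id": "prompt", "code": "<trusted, reviewed code>"}]},
}


def resolve_flow_vulnerable(flow_id: str, supplied: Optional[dict]) -> dict:
    """Flawed pattern: a client-supplied definition wins over the stored one."""
    if supplied is not None:
        return supplied  # attacker-controlled node code would be built next
    return STORED_FLOWS[flow_id]


def resolve_flow_safe(flow_id: str, supplied: Optional[dict], authenticated: bool) -> dict:
    """Stricter pattern: unauthenticated callers only ever get the stored flow."""
    if supplied is not None and not authenticated:
        raise PermissionError("flow data may not be supplied without authentication")
    if supplied is not None:
        return supplied
    return STORED_FLOWS[flow_id]
```

The stricter variant does not make executing node code safe by itself; it only removes the unauthenticated path by which untrusted code reaches the build step.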

Sysdig's cloud threat intelligence team observed the attack progression in real time. Scanning for vulnerable Langflow instances began approximately 20 hours after the March 17 advisory, and exploitation using Python scripts followed by the 21-hour mark. By the 24-hour mark, attackers had shifted from initial access to data harvesting, targeting the .env files and .db databases that contain the credentials Langflow needs to operate.

What makes this attack chain particularly dangerous is what those credentials unlock. A single compromised Langflow instance can yield API keys for multiple LLM providers (OpenAI, Anthropic, Cohere), AWS access keys and secret keys, database connection strings for PostgreSQL and vector stores, and webhook URLs for downstream automation. These credentials are not low-value targets. LLM API keys are resold on criminal marketplaces, used to generate synthetic content at the victim's expense, or repurposed for supply-chain attacks against the operator's downstream AI services. AWS credentials provide direct lateral movement into cloud infrastructure. JFrog's security research team later confirmed that even the "fixed" version 1.9.0 remained partially exploitable under certain configurations, extending the exposure window further.

Why AI Infrastructure Is the New Credential Goldmine

The Langflow incident exposes a structural problem that extends far beyond a single CVE. Modern AI and ML pipelines are, by their very architecture, credential aggregation points. A typical production Langflow, LangChain, or similar orchestration deployment connects to five to fifteen external services, each requiring stored credentials. Unlike a traditional web application that might hold a single database password, an AI pipeline is a Swiss bank vault of API keys, cloud tokens, and service accounts.
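One rough way to see this aggregation on a given host is to group the variable names in its .env file by the kind of access they grant. The sketch below assumes conventional variable names such as `OPENAI_API_KEY` and `AWS_SECRET_ACCESS_KEY`; the `CATEGORY_PATTERNS` table and `classify_env` function are illustrative, not part of any tool named in this article, and would need extending for real naming schemes.

```python
import re

# Hypothetical name patterns; extend to match your own naming conventions.
CATEGORY_PATTERNS = {
    "llm_provider": re.compile(r"(OPENAI|ANTHROPIC|COHERE)_API_KEY$"),
    "cloud_iam": re.compile(r"^AWS_(ACCESS_KEY_ID|SECRET_ACCESS_KEY|SESSION_TOKEN)$"),
    "database": re.compile(r"(DATABASE_URL|_DSN|POSTGRES_PASSWORD)$"),
}


def classify_env(text: str) -> dict:
    """Group variable names from .env-style text by the kind of access they grant."""
    found = {category: [] for category in CATEGORY_PATTERNS}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        name = line.split("=", 1)[0].strip()
        if name.startswith("export "):
            name = name[len("export "):].strip()
        for category, pattern in CATEGORY_PATTERNS.items():
            if pattern.search(name):
                found[category].append(name)
                break
    return found
```

Only names are collected, never values, so the output can be shared with a security team without copying the secrets themselves.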

This concentration of secrets creates a blast radius that security teams routinely underestimate. When Gartner published its 2026 Emerging Risks Monitor in February, AI infrastructure compromise ranked among the top five technology risks for the second consecutive quarter. The reason is straightforward: organizations are deploying AI tools faster than they are securing the credentials those tools require. Shadow AI deployments — Langflow instances spun up by data science teams without security review — are especially vulnerable. They often run with overprivileged cloud credentials, lack network segmentation, and are exposed directly to the internet.

The 50% surge in initial access broker advertisements reported by CrowdStrike's 2026 Global Threat Report compounds the problem. Stolen credentials from AI infrastructure are high-margin inventory for access brokers because they provide authenticated entry into multiple downstream systems from a single compromise.

Why Traditional Defenses Fall Short

The Langflow CVE-2026-33017 timeline exposes the fundamental timing gap in conventional security approaches. Consider the defense-in-depth stack that most organizations rely on.

Vulnerability scanning identifies the CVE — but by the time the next scheduled scan runs, exploitation is already underway. The 20-hour exploitation window is shorter than most organizations' patch management SLAs. Patching requires testing, change approval, and deployment across potentially dozens of Langflow instances, many of which security teams may not even know exist.

Secrets management tools like HashiCorp Vault can rotate credentials — but they cannot detect when a credential has already been exfiltrated and is being used by an attacker from an external IP. The stolen API key is, from the provider's perspective, indistinguishable from legitimate usage until billing anomalies surface days or weeks later.

Network monitoring and EDR can detect the initial exploitation attempt if signatures are available — but the exploit is a single legitimate-looking HTTP POST to a valid API endpoint. There is no malware binary, no suspicious process tree, no memory injection. The post-exploitation credential harvesting reads files from disk using standard Python, exactly as Langflow itself does during normal operation.
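Because the request looks legitimate, about the best a log review can do is surface every POST to the affected route for a human to examine. The sketch below assumes a reverse proxy writing Common or Combined Log Format lines; `find_build_public_posts` is a hypothetical helper, and a hit is a lead to investigate, not proof of exploitation, since public flows use the same endpoint in normal operation.

```python
import re

# Matches the leading fields of a Common/Combined Log Format line.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<ts>[^\]]+)\] "(?P<method>[A-Z]+) (?P<path>\S+)'
)
# Path shape taken from the endpoint discussed earlier in this article.
BUILD_PUBLIC = re.compile(r"^/api/v1/build_public_tmp/[^/]+/flow(\?|$)")


def find_build_public_posts(lines):
    """Return (source_ip, timestamp, path) for each POST to the public build route."""
    hits = []
    for line in lines:
        m = LOG_LINE.match(line)
        if m and m.group("method") == "POST" and BUILD_PUBLIC.match(m.group("path")):
            hits.append((m.group("ip"), m.group("ts"), m.group("path")))
    return hits
```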

SIEM correlation can eventually flag anomalous API key usage patterns — but the alert fires after the attacker has already used the stolen credentials to access cloud resources, exfiltrate data, or pivot deeper into the environment. Detection at this stage is incident response, not prevention.

The core gap is clear: no tool in the traditional stack detects the moment a stolen credential is first used by an unauthorized actor, which is the single most valuable detection point in the entire kill chain.

How Deception Technology and Mine2 Close the Gap

This is precisely the scenario that cyber deception technology was built for. Mine2's approach plants fake credentials — Credential Mines — directly into the environment that attackers are targeting. When the attacker harvests credentials from a compromised Langflow instance, they collect real keys alongside planted mines. The moment they attempt to use a Credential Mine against any service, Mine2 generates a zero-false-positive alert with full attribution: source IP, timestamp, the specific mine that was triggered, and the action attempted.

Here is how the Mine2 platform maps to each stage of the Langflow attack chain.

Initial Access and Reconnaissance. MineField deploys decoy TCP services across the network that mimic common AI infrastructure ports — Langflow's default 7860, Jupyter notebook servers, MLflow tracking endpoints. When an attacker scans for exposed AI tools, MineField detects the port sweep before any real service is touched, generating an early-warning alert with the scanner's source IP and targeted ports.

Credential Harvesting. Credential Mines are planted as realistic-looking API keys, database connection strings, and cloud credentials in .env files, configuration directories, and environment variables across servers that host AI workloads. When an attacker dumps credentials from a compromised instance, the mines are indistinguishable from real secrets. Every mine is unique, so when it is used, Mine2 knows exactly which host was compromised and which credential file was exfiltrated.

Lateral Movement to Cloud. Cloud Mines create fake AWS IAM keys, S3 bucket references, and service account tokens that are seeded across the environment. When an attacker uses a stolen AWS key from a Langflow .env file and it happens to be a Cloud Mine, the alert fires immediately — before any real cloud resource is accessed. This is critical for detecting the cloud pivot that makes AI infrastructure compromises so dangerous.

Data Exfiltration and Persistence. AD Mines and Data Mines planted in directories adjacent to AI model artifacts and training data detect unauthorized access attempts. If an attacker tries to move beyond credential theft into intellectual property exfiltration — stealing proprietary models, training datasets, or fine-tuning configurations — the mines trigger on first touch.
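The attribution described in the Credential Harvesting stage depends on every mine being unique to where it was planted. A minimal sketch of that idea follows. It is not Mine2's implementation: `make_decoy_key`, `build_registry`, and `attribute` are hypothetical names, and the `DECOY` prefix is a placeholder, since a realistic decoy would mimic a provider's key format instead.

```python
import hashlib
import hmac
import string

ALPHABET = string.ascii_uppercase + string.digits


def make_decoy_key(secret: bytes, host: str, file_path: str) -> str:
    """Derive a deterministic decoy token that is unique per host and file."""
    digest = hmac.new(secret, f"{host}|{file_path}".encode(), hashlib.sha256).digest()
    body = "".join(ALPHABET[b % len(ALPHABET)] for b in digest[:16])
    return "DECOY" + body  # placeholder prefix, see the note above


def build_registry(secret: bytes, placements):
    """Map each decoy token back to the (host, file) where it was planted."""
    return {make_decoy_key(secret, host, path): (host, path) for host, path in placements}


def attribute(registry, observed_token: str):
    """Return the planting location for a token seen in use, or None if unknown."""
    return registry.get(observed_token)
```

When a token shows up in an authentication attempt, `attribute` answers the two questions that matter for containment: which host was compromised, and which file was read.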

The critical differentiator is timing. Traditional detection finds evidence of compromise after the attacker has used real credentials and caused real damage. A Credential Mine fires the moment an attacker attempts to use it. Mines are designed to be the credentials an attacker tries first, and because no legitimate user or system ever has a reason to use one, every trigger is a genuine signal of compromise.

Compliance Implications: From Detection to Mandatory Reporting

The Langflow attack pattern — credential theft from AI infrastructure leading to cloud lateral movement — triggers mandatory breach notification requirements across multiple regulatory frameworks. Deception-based detection is uniquely positioned to satisfy these requirements because it provides irrefutable proof of unauthorized access with precise timestamps and attribution.

GDPR Article 33 requires notification to the supervisory authority within 72 hours of becoming aware of a personal data breach, and Article 34 requires informing affected data subjects without undue delay when the breach is likely to pose a high risk to them. When an AI pipeline processes personal data (which most enterprise LLM workflows do), credential theft from that pipeline constitutes potential unauthorized access to personal data. Mine2's zero-false-positive alerts provide the definitive "awareness" timestamp that regulators require, eliminating ambiguity about when the organization knew a breach had occurred.

India's Digital Personal Data Protection (DPDP) Act, 2023 mandates that data fiduciaries notify the Data Protection Board of India and affected data principals in the event of a personal data breach. AI pipelines processing Indian citizen data fall squarely within scope. Mine2's audit trail — showing exactly which credentials were exfiltrated, when they were used, and from where — satisfies the DPDP Act's requirement for breach notification with specific details about the nature of the breach.

PCI-DSS Requirement 11 mandates regular testing of security systems and processes, including intrusion detection. For organizations whose AI pipelines touch cardholder data environments (increasingly common with AI-driven fraud detection and payment processing), Mine2's deception layer supports the intrusion-detection and change-detection controls under Requirement 11 (11.4 and 11.5 in PCI DSS v3.2.1; 11.5.1 and 11.5.2 in v4.0) by alerting continuously on any use of planted credentials.

RBI and SEBI Cybersecurity Directives for Indian financial institutions require comprehensive cyber incident reporting. The Reserve Bank of India's Master Direction on Information Technology Governance, Risk, Controls and Assurance Practices (issued in November 2023 and effective from April 2024) and SEBI's Cybersecurity and Cyber Resilience Framework both mandate detection, reporting, and containment of unauthorized access. Mine2's immediate alerting on credential misuse satisfies the RBI's requirement for real-time or near-real-time detection capabilities.

CERT-In 6-Hour Reporting under the April 2022 directive requires organizations to report cyber incidents to CERT-In within six hours of noticing them. The Langflow CVE's 20-hour exploitation timeline means organizations need detection that fires in minutes, not days. Mine2's instant alerts when mines are triggered provide the fastest possible detection-to-reporting timeline, helping organizations meet CERT-In's aggressive reporting window.

HIPAA Security Rule requires covered entities to implement procedures to detect unauthorized access to electronic protected health information (ePHI). Healthcare organizations using AI pipelines for clinical decision support, medical imaging, or patient data analysis must detect credential theft from those systems. Mine2's Credential Mines satisfy HIPAA's technical safeguard requirements for audit controls (§164.312(b)) and access controls (§164.312(a)).

A Practical Playbook for Securing AI Infrastructure

The Langflow incident provides a clear blueprint for organizations deploying AI tools to strengthen their defenses before the next zero-day drops.

Step 1: Inventory every AI tool and pipeline. Conduct a comprehensive discovery of all Langflow, LangChain, MLflow, Jupyter, and similar deployments — including shadow IT instances deployed by data science teams without security review. You cannot defend what you do not know exists.

Step 2: Deploy MineField decoy services on AI-adjacent ports. Configure decoy listeners on ports commonly associated with AI tools (7860, 8888, 5000, 8080) across network segments where AI workloads operate. This provides immediate visibility into reconnaissance activity targeting your ML infrastructure.
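To make the idea behind Step 2 concrete, here is a minimal sketch of a decoy listener built on Python's asyncio. It is an illustration of the technique, not MineField itself: `record_probe`, `handle_probe`, and `serve_decoys` are hypothetical names, and the `events` list stands in for a real alerting pipeline.

```python
import asyncio
from datetime import datetime, timezone

events = []  # stand-in for an alerting pipeline


def record_probe(peer, local):
    """Store one event describing who connected to which decoy port."""
    event = {
        "time": datetime.now(timezone.utc).isoformat(),
        "source_ip": peer[0] if peer else None,
        "decoy_port": local[1] if local else None,
    }
    events.append(event)
    return event


async def handle_probe(reader, writer):
    """Any connection to a decoy is suspicious: log it, then hang up."""
    record_probe(writer.get_extra_info("peername"), writer.get_extra_info("sockname"))
    writer.close()
    try:
        await writer.wait_closed()
    except ConnectionError:
        pass  # the scanner may already have dropped the connection


async def serve_decoys(ports, host="0.0.0.0"):
    """Bind one listener per port and serve until cancelled."""
    servers = [await asyncio.start_server(handle_probe, host, port) for port in ports]
    await asyncio.gather(*(server.serve_forever() for server in servers))
```

Running `asyncio.run(serve_decoys([7860, 8888, 5000, 8080]))` on a host where those ports are otherwise unused would start the listeners. Since nothing legitimate should ever connect, each recorded event is worth a look.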

Step 3: Plant Credential Mines in every .env and configuration file. Seed realistic fake API keys, database credentials, and cloud tokens alongside real credentials in configuration files across AI infrastructure. Mine2's single-click deployment makes this achievable across hundreds of instances without operational overhead.

Step 4: Deploy Cloud Mines for every cloud provider your AI stack uses. Create fake AWS IAM keys, Azure service principal credentials, and GCP service account tokens that mirror the credential types your AI pipelines legitimately use. These catch the cloud lateral movement that makes AI infrastructure compromises so damaging.

Step 5: Segment AI workloads and restrict outbound access. Langflow instances should not have unrestricted internet access. Limit outbound connections to required LLM provider endpoints and internal services. This limits the attacker's ability to exfiltrate credentials to external infrastructure.
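The policy in Step 5 reduces to an exact-match allowlist. The sketch below expresses that rule in code, assuming HTTPS-only egress; `ALLOWED_HOSTS` and `is_egress_allowed` are hypothetical, and `vectors.internal.example` is a made-up internal hostname. Real enforcement belongs in a firewall or egress proxy, so treat this as a statement of the rule and not as the control itself.

```python
from urllib.parse import urlsplit

# Assumed allowlist: two public LLM API hosts plus a hypothetical internal service.
ALLOWED_HOSTS = {"api.openai.com", "api.anthropic.com", "vectors.internal.example"}


def is_egress_allowed(url: str, allowed=ALLOWED_HOSTS) -> bool:
    """Allow only HTTPS requests to exact hostnames on the allowlist."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    return parts.scheme == "https" and host in allowed
```

Exact matching matters: a suffix or substring check would accept a lookalike such as `api.openai.com.evil.example`.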

Step 6: Implement credential rotation with deception integration. When Mine2 fires an alert indicating credential compromise, trigger automated rotation of all credentials stored on the affected host. This combines detection speed with containment speed, minimizing the window during which stolen real credentials remain valid.
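A sketch of the glue logic in Step 6 might look like the following. The alert shape, the `CREDENTIAL_INVENTORY` mapping, and the `rotate` callback are all assumptions made for illustration; nothing here reflects Mine2's actual alert format or any secrets manager's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MineAlert:
    host: str
    mine_id: str
    source_ip: str


# Hypothetical inventory: which real credentials live on which host.
CREDENTIAL_INVENTORY = {
    "ml-01": ["OPENAI_API_KEY", "AWS_ACCESS_KEY_ID", "DATABASE_URL"],
    "ml-02": ["ANTHROPIC_API_KEY"],
}


def plan_rotation(alert: MineAlert, inventory=CREDENTIAL_INVENTORY):
    """Return every real credential on the alerted host, to be rotated together."""
    return [(alert.host, name) for name in inventory.get(alert.host, [])]


def handle_alert(alert: MineAlert, rotate) -> int:
    """Call rotate(host, name) for each credential on the host; return the count."""
    plan = plan_rotation(alert)
    for host, name in plan:
        rotate(host, name)
    return len(plan)
```

Rotating everything on the host, not only the credential type that tripped the mine, follows from the attack pattern: an attacker who read one .env file read all of it.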

Step 7: Establish a sub-six-hour incident response workflow. Build a runbook that maps Mine2 alerts to CERT-In's six-hour reporting requirement and GDPR's 72-hour notification window. Pre-draft notification templates with placeholders for Mine2's attribution data: source IP, timestamp, compromised host, and specific credential targeted.
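The deadline arithmetic for such a runbook is simple enough to automate. The sketch below computes both reporting deadlines from the moment the organization became aware of the incident. `REPORTING_WINDOWS`, `reporting_deadlines`, and `time_remaining` are illustrative names, and the six-hour and 72-hour windows are the ones cited in this article.

```python
from datetime import datetime, timedelta, timezone

REPORTING_WINDOWS = {
    "CERT-In": timedelta(hours=6),
    "GDPR Article 33": timedelta(hours=72),
}


def reporting_deadlines(aware_at: datetime) -> dict:
    """Return the latest permissible report time for each regime."""
    if aware_at.tzinfo is None:
        raise ValueError("aware_at must be timezone-aware")
    return {name: aware_at + window for name, window in REPORTING_WINDOWS.items()}


def time_remaining(aware_at: datetime, now: datetime) -> dict:
    """Return how long is left for each regime; negative values mean overdue."""
    return {name: deadline - now for name, deadline in reporting_deadlines(aware_at).items()}
```

For an alert at 09:00 UTC, the CERT-In report would be due by 15:00 UTC the same day and the GDPR notification by 09:00 UTC three days later.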

The Window Is Closing

The Langflow CVE-2026-33017 attack demonstrated that the gap between vulnerability disclosure and active exploitation has collapsed to under a day for high-value targets. AI infrastructure, with its concentrated stores of API keys and cloud credentials, is among the highest-value targets in the modern enterprise. Traditional security tools operate on timelines measured in hours to days — scanning, patching, correlating — while attackers operate in minutes.

Deception technology inverts this equation. Instead of racing to patch before attackers exploit, organizations plant mines that detect attackers regardless of how they gained access — whether through a zero-day, a stolen credential, or an insider threat. The alert fires on first use. Zero false positives. Zero performance impact. Single-click deployment.

The organizations that will survive the AI infrastructure attack wave are those that stop trying to prevent every possible entry point and start ensuring that every stolen credential becomes a tripwire. Mine2's Credential Mines, Cloud Mines, and MineField transform your AI infrastructure from a credential goldmine into a detection grid that catches attackers the moment they try to use what they stole.


mine2 team

The MINE2 team consists of cybersecurity experts, researchers, and engineers dedicated to advancing threat detection and cyber deception technologies.
