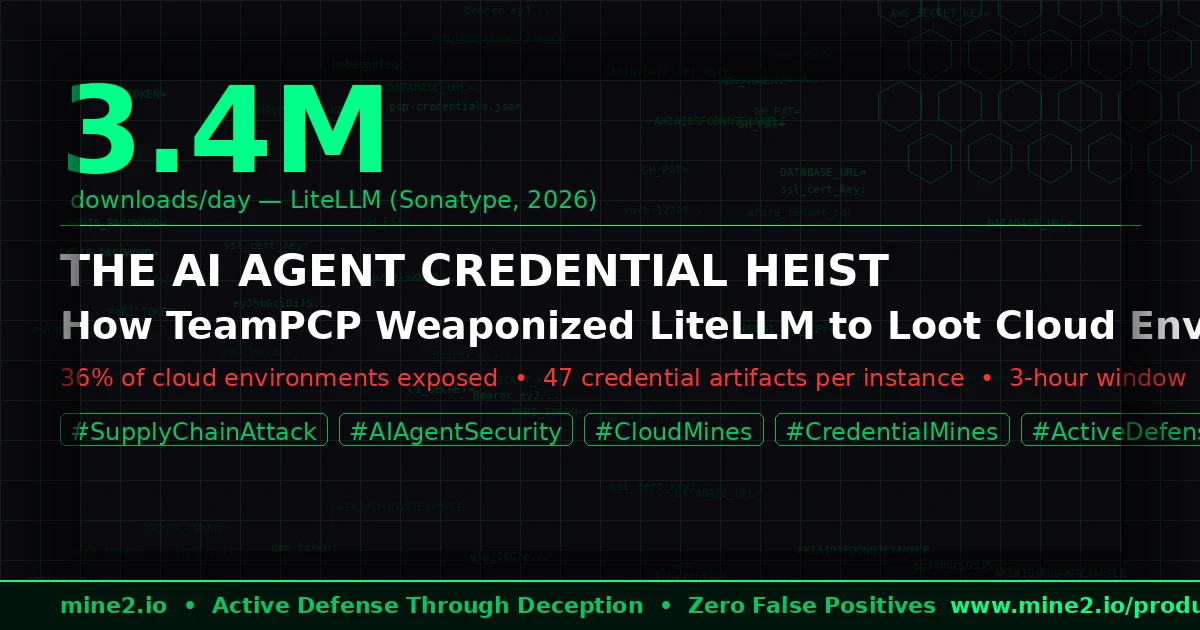

On March 24, 2026, at approximately 11:47 AM UTC, an attacker published two versions of the LiteLLM Python package, 1.82.7 and 1.82.8, containing a fully operational multi-stage credential stealer. For the three hours before PyPI quarantined the package, those versions were available to every automated build pipeline, Docker container, and CI/CD workflow that ran pip install litellm. LiteLLM is downloaded 3.4 million times per day and is present in 36% of cloud environments globally (Sonatype, 2026). The math is not comforting.

This wasn't opportunistic. It was the third strike in a methodical five-day campaign by a threat group called TeamPCP, who first compromised Aqua Security's Trivy container scanner (March 19), then Checkmarx's AST GitHub Actions (March 21), and finally LiteLLM itself (March 24), each time using credentials stolen in the prior compromise to pivot to the next, higher-value target. The credential chain that began with a poisoned security scanner ran through a stolen PyPI publishing token and ended inside cloud environments running production AI workloads.

The stolen data catalog is not subtle: AWS IAM credentials, GCP service account keys, Azure tenant tokens, Kubernetes kubeconfig files, CI/CD pipeline secrets (GitHub Actions, GitLab CI), Docker registry credentials, database connection strings, OpenAI and Anthropic API keys, and cryptocurrency wallet keys. In one exfiltration payload reviewed by Sonatype researchers, a single compromised LiteLLM instance yielded 47 unique credential artifacts across six cloud providers.

The question is not whether your AI stack was exposed. The question is: when those credentials are used, will you know?

Why the AI Agent Stack Is the New Credential Goldmine

The security industry has spent years hardening the application layer — patching CVEs within SLAs, deploying EDR on every endpoint, layering MFA across user-facing systems. What it largely hasn't done is treat the AI agent infrastructure as a security boundary.

LiteLLM, Langflow, CrewAI, AutoGen — these are not toy frameworks. They are production middleware sitting between your applications and every major cloud API, database, and LLM provider. They are configured with real credentials. They run with real permissions. And they are frequently deployed with the same trust assumptions as internal tooling — which is to say, almost none.

The TeamPCP attack exploited this blind spot with mechanical precision. By poisoning Trivy — a widely trusted container security scanner embedded in thousands of CI/CD pipelines — they turned the security toolchain itself into a credential harvesting network. When LiteLLM's maintainers ran their own CI/CD build on March 24, Trivy executed its malicious payload, extracted the PyPI publishing token from the build environment, and uploaded it to an attacker-controlled relay within 90 seconds of job start (ReversingLabs, 2026).

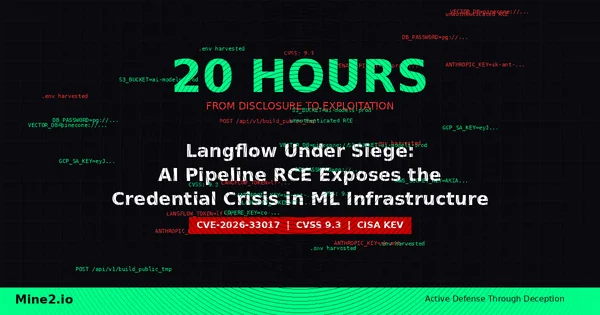

The broader context makes this more alarming. Just one week earlier, on March 17, 2026, CVE-2026-33017 was disclosed — an unauthenticated remote code execution vulnerability in Langflow, the visual AI pipeline builder. Sysdig's Threat Research Team recorded active exploitation within 20 hours of disclosure, with attackers specifically targeting the LLM API keys and cloud service credentials stored in Langflow's environment configuration. In one observed incident, a compromised Langflow instance yielded an OpenAI API key that was immediately used to generate $18,400 in inference charges before revocation, and an AWS access key that granted read access to 14 S3 buckets before the key was rotated (Sysdig, 2026).

Two separate threat actors, two separate attack chains, both targeting the same surface: the AI agent credential store.

Why Traditional Security Tools Miss the Post-Theft Lateral Movement

Here is the failure mode that matters most: the TeamPCP credential stealer was present in the PyPI ecosystem for three hours. Automated build systems don't wait. Dependency update bots — Dependabot, Renovate, custom scripts — often run on schedules measured in minutes, not hours. By the time PyPI quarantined the package, thousands of build environments had already executed the malicious code and exfiltrated their credential stores.

Your SIEM saw nothing unusual. The malicious code ran inside a legitimate build process, making outbound HTTPS requests to a domain registered 72 hours earlier, a pattern indistinguishable from normal package installation telemetry. Your EDR didn't fire. The credential stealer operated entirely in userspace, reading environment variables and config files through standard Python file I/O: no process injection, no kernel-level tampering, none of the behavioral patterns that signature-based detection recognizes.

Your cloud provider's threat detection — AWS GuardDuty, Azure Defender for Cloud, GCP Security Command Center — does log API activity. But consider the detection latency problem: GuardDuty's anomaly detection for credential abuse typically requires 3–7 days of baseline behavior before it can distinguish legitimate API calls from an attacker using stolen long-term credentials that have never been used before. If an attacker exfiltrates an AWS key that your CI/CD pipeline generates fresh for each run and never reuses, GuardDuty has no behavioral baseline to compare against.

The rotation advice is correct but incomplete. Rotating credentials after a known compromise is necessary. It is not sufficient. In the five days between the initial Trivy compromise (March 19) and the LiteLLM quarantine (March 24), attackers had active, undetected access to credentials from every build pipeline that used the affected tools. Some of those credentials have not yet been identified as compromised, because the organizations don't know they were exposed.

Traditional security operates on known-bad. Supply chain attacks succeed by being unknown-bad — using legitimate credentials, through legitimate channels, to do things that look legitimate until they don't.

How Cloud Mines and Credential Mines Detect What Traditional Security Misses

Mine2's deception technology operates on a fundamentally different principle: if an attacker touches a mine, you know it — with zero false positives, regardless of whether the credential they used was "known-bad."

Cloud Mines: The AWS Tripwire Network

Cloud Mines are fake AWS resources — S3 buckets with enticing names, IAM roles with high-sounding permissions, Lambda functions, DynamoDB tables, EC2 instances — deployed alongside your real infrastructure and configured to look exactly like production assets. They have no legitimate use. No internal system calls them. No automation references them.

When a threat actor uses a stolen AWS IAM key — whether exfiltrated by TeamPCP's LiteLLM stealer or obtained through any other means — their first action is reconnaissance: ListBuckets, DescribeInstances, ListRoles. These enumeration API calls hit real resources and fake ones alike. The moment a Cloud Mine is accessed, Mine2 fires an alert with the full API call context, the IAM identity used, the source IP, and the exact timestamp.

This is not anomaly detection. There is no baseline required. There is no machine learning model that needs tuning. An alert from a Cloud Mine means, by definition, that someone is using credentials to probe infrastructure they should not be probing. The attacker using TeamPCP's stolen AWS keys will hit Cloud Mines during their first reconnaissance sweep — before they have exfiltrated a single byte of real data, before they have pivoted to any real resource.

The detection happens at the earliest possible point in the kill chain: the first post-theft API call.
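The tripwire idea can be illustrated with a minimal sketch. This is not Mine2's implementation; it assumes API activity has already been exported as dictionaries using CloudTrail record field names (`eventName`, `userIdentity`, `sourceIPAddress`, `eventTime`, `requestParameters`), and the decoy bucket names and the `find_decoy_hits` helper are hypothetical. It matches only calls that name a specific bucket, since an account-wide call such as ListBuckets does not reference an individual resource.

```python
from typing import Iterable

# Hypothetical decoy bucket names; a real deployment would load these from inventory.
DECOY_BUCKETS = frozenset({"prod-billing-exports", "ml-training-archive"})


def find_decoy_hits(events: Iterable[dict], decoys: frozenset = DECOY_BUCKETS) -> list:
    """Return one alert per event that references a decoy bucket.

    No baseline is consulted: any reference to a decoy is treated as a hit.
    """
    alerts = []
    for event in events:
        params = event.get("requestParameters") or {}
        bucket = params.get("bucketName")
        if bucket in decoys:
            alerts.append({
                "resource": bucket,
                "api_call": event.get("eventName"),
                "identity": (event.get("userIdentity") or {}).get("arn"),
                "source_ip": event.get("sourceIPAddress"),
                "time": event.get("eventTime"),
            })
    return alerts
```

Because the check is a set membership test rather than a statistical comparison, a freshly issued key that has never been seen before is flagged on its first touch of a decoy.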

Credential Mines: The Decoy Key Store

Credential Mines are fake credentials — API keys, bearer tokens, database connection strings, cloud access keys — deployed where real credentials live: in .env files, Kubernetes secrets, CI/CD environment variables, application configuration stores. They look exactly like real credentials. They are formatted correctly. They pass regex validation. They do not work.

In an AI agent environment, Credential Mines are deployed as fake OpenAI API keys, fake AWS keys in LiteLLM's configuration, fake Anthropic API tokens in Langflow's settings — sitting alongside real ones, indistinguishable to any automated exfiltration tool. When TeamPCP's credential stealer harvests the environment variables and configuration files of a compromised LiteLLM instance, it vacuums up real credentials and Credential Mines alike.

The moment an attacker attempts to use a Credential Mine — calling the OpenAI API, making an AWS API call, authenticating to a database — Mine2 records the attempt, the source, the target service, and the exact credential used. The attacker gets an authentication failure. You get a high-fidelity alert that tells you exactly which credential was stolen, which attacker IP attempted to use it, and which service they were targeting.
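As a rough illustration of the decoy-key idea, the sketch below generates a string shaped like an AWS access key ID and remembers where it was planted. It is an assumption-laden example, not how Mine2 builds or monitors its mines: the `AKIA[A-Z0-9]{16}` pattern is the one commonly used by secret scanners, `DecoyRegistry` is a hypothetical helper, and the mechanism that observes an attempted use (the part a deception platform provides) is out of scope here.

```python
import re
import secrets
import string
from typing import Optional

# Pattern commonly used by secret scanners for AWS access key IDs (assumption).
AWS_KEY_ID_PATTERN = re.compile(r"^AKIA[A-Z0-9]{16}$")
_ALPHABET = string.ascii_uppercase + string.digits


def make_decoy_access_key_id() -> str:
    """Build a random string that is shaped like an access key ID but was never issued."""
    return "AKIA" + "".join(secrets.choice(_ALPHABET) for _ in range(16))


class DecoyRegistry:
    """Hypothetical registry mapping each decoy key to the place it was planted."""

    def __init__(self) -> None:
        self._placed = {}

    def plant(self, location: str) -> str:
        key_id = make_decoy_access_key_id()
        self._placed[key_id] = location
        return key_id

    def check_use(self, key_id: str, source_ip: str) -> Optional[dict]:
        """Return an alert if key_id is a planted decoy, else None."""
        location = self._placed.get(key_id)
        if location is None:
            return None
        return {"key_id": key_id, "planted_at": location, "source_ip": source_ip}
```

Recording the planting location is what lets a later alert say which file or secret store was harvested, not just that a theft occurred.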

MineField: The Lateral Movement Sensor Network

After credential theft comes lateral movement. Attackers with valid cloud credentials don't stop at enumeration — they probe for open services, scan for internal endpoints, and attempt to reach adjacent systems. MineField deploys decoy TCP services across your network — fake SSH servers, fake database listeners, fake API endpoints — that exist solely to detect this scanning behavior.

In a cloud environment compromised via stolen LiteLLM credentials, an attacker attempting to reach internal services will inevitably hit MineField nodes. Detection is immediate, happens at the connection level before any authentication takes place, and generates actionable forensic data: the source IP, the service being probed, and the timing of each probe. Combined with Cloud Mine alerts from the same attacker IP, MineField provides corroborating evidence that establishes the full lateral movement timeline.
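A decoy TCP service can be sketched in a few lines of standard-library Python. This is a simplified illustration under stated assumptions, not MineField itself: `start_decoy` and `run_decoy_listener` are hypothetical names, the listener records connections without speaking any protocol, and it stops after a fixed number of connections so the example terminates.

```python
import socket
import threading
from datetime import datetime, timezone


def run_decoy_listener(server: socket.socket, hits: list, max_connections: int = 1) -> None:
    """Accept connections on a decoy socket and record each one, then close it."""
    for _ in range(max_connections):
        conn, (ip, port) = server.accept()
        with conn:
            # Nothing legitimate should ever connect here, so every accept is logged.
            hits.append({
                "source_ip": ip,
                "source_port": port,
                "decoy_port": server.getsockname()[1],
                "time": datetime.now(timezone.utc).isoformat(),
            })


def start_decoy(host: str = "127.0.0.1", port: int = 0):
    """Start a decoy listener in a background thread; port 0 lets the OS pick a free port."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    server.bind((host, port))
    server.listen()
    hits = []
    thread = threading.Thread(target=run_decoy_listener, args=(server, hits), daemon=True)
    thread.start()
    return server, hits, thread
```

The record is written as soon as the TCP handshake completes, which is why a scan that never attempts a login still leaves a timestamped trace.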

The Zero False Positive Guarantee

Every alert from Mine2's deception layer is a confirmed hostile action. No real user, no legitimate automation, and no misconfigured system will ever trigger a Cloud Mine, use a Credential Mine, or probe a MineField service. Security teams receive high-fidelity alerts that require action — not noise that requires triage. In the aftermath of a supply chain compromise like TeamPCP's LiteLLM attack, where the scope of credential theft may not be fully known for days, Mine2's deception layer provides continuous monitoring that catches attacker activity regardless of which specific credential they're using.

Compliance Requirements: Why Breach Detection Speed Is Now a Legal Obligation

The TeamPCP campaign isn't just a security incident. For any organization with exposed credentials that were used by the attacker, it's a notifiable breach under multiple regulatory frameworks — and the clock for reporting started the moment the attacker made their first API call with stolen credentials.

CERT-In 6-Hour Reporting: India's CERT-In cybersecurity directive requires organizations to report cybersecurity incidents — including unauthorized access to cloud systems — within 6 hours of detection. The operative word is detection. An organization whose cloud credentials were exfiltrated on March 19 but whose first Cloud Mine alert fires on March 26 has a 7-day detection gap it cannot explain to regulators. Mine2's real-time alerting provides the documented detection timestamp that starts the compliance clock accurately.

GDPR Articles 33 and 34: Under GDPR, a personal data breach must be reported to supervisory authorities within 72 hours of becoming aware of it (Article 33). If the breach involves high risk to individuals, affected parties must be notified without undue delay (Article 34). For organizations processing EU personal data through AI agent pipelines — a common pattern in SaaS applications — any credential theft that could result in unauthorized access to personal data triggers these obligations. Mine2's deception layer provides the audit trail that documents when awareness occurred, what was accessed, and what data was at risk.

India DPDP Act: The Digital Personal Data Protection Act requires Data Fiduciaries to notify the Data Protection Board of India upon breach of personal data. Cloud credential theft that exposes environments containing personal data is a qualifying breach. The Mine2 audit trail provides the incident timeline documentation required for regulatory submissions.

PCI-DSS Requirement 11: PCI-DSS v4.0 Requirement 11.5 mandates unauthorized access detection and alerting for cardholder data environments. Cloud Mines deployed in AWS environments handling payment data provide continuous, automated detection coverage that satisfies this requirement with documented, timestamped alerts.

RBI and SEBI Directives: The Reserve Bank of India's cybersecurity framework for banks and the Securities and Exchange Board of India's cybersecurity circular both require immediate incident reporting for cyber incidents involving unauthorized access. Financial institutions using AI agent frameworks in trading systems, fraud detection pipelines, or customer data processing have a specific obligation to detect and report credential-based access events — precisely the scenario TeamPCP's attack creates.

HIPAA Breach Notification Rule: Healthcare organizations running AI-assisted diagnostics, clinical decision support, or administrative automation on infrastructure that uses LiteLLM or similar frameworks face HIPAA breach notification obligations if protected health information was accessible via compromised credentials. Mine2's Cloud Mines provide the forensic evidence needed to determine whether PHI was accessed — critical for establishing whether a breach is notifiable or falls under the safe harbor provisions.
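The two fixed reporting windows named above (6 hours for CERT-In, 72 hours for GDPR Article 33) reduce to simple timestamp arithmetic. The sketch below assumes, for illustration only, that the first alert timestamp is treated as the moment of detection or awareness for both frameworks; the `reporting_deadlines` helper is hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Windows as stated in the text above.
REPORTING_WINDOWS = {
    "CERT-In": timedelta(hours=6),
    "GDPR Article 33": timedelta(hours=72),
}


def reporting_deadlines(detected_at: datetime) -> dict:
    """Map each framework to its reporting deadline, given a timezone-aware detection time."""
    if detected_at.tzinfo is None:
        raise ValueError("detected_at must be timezone-aware")
    return {name: detected_at + window for name, window in REPORTING_WINDOWS.items()}


if __name__ == "__main__":
    first_alert = datetime(2026, 3, 26, 9, 0, tzinfo=timezone.utc)
    for framework, deadline in reporting_deadlines(first_alert).items():
        print(f"{framework}: report by {deadline.isoformat()}")
```

Requiring a timezone-aware timestamp avoids ambiguity when the alerting system and the compliance team operate in different time zones.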

The Practitioner Playbook: Defending AI Agent Infrastructure with Deception

The following steps address both immediate response to the TeamPCP campaign and longer-term hardening of AI agent environments using a deception-first approach.

Step 1: Immediate Credential Audit (24 Hours) — Run a dependency audit across all Python environments to identify whether LiteLLM versions 1.82.7 or 1.82.8 were installed between March 24 and March 27, 2026. Check build logs, Docker layer histories, and pip audit outputs. For any environment that executed these versions, treat all credentials accessible in that environment as compromised and rotate immediately.
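One way to start the audit in Step 1 is a small script that checks pinned requirements (or saved pip freeze output) and the currently installed package against the two affected versions. This is a minimal sketch: the helper names are hypothetical, it only catches exact `==` pins, and it does not inspect Docker layers or historical build logs.

```python
import re
from importlib import metadata

# Affected versions as named in this article.
COMPROMISED_VERSIONS = {"1.82.7", "1.82.8"}

# Matches lines such as "litellm==1.82.7" or "litellm[proxy] == 1.82.8  # comment".
_PIN = re.compile(r"^\s*litellm(?:\[[^\]]*\])?\s*==\s*([^\s;#]+)", re.IGNORECASE)


def compromised_pins(lines) -> list:
    """Return the requirement lines that pin litellm to an affected version."""
    hits = []
    for line in lines:
        match = _PIN.match(line)
        if match and match.group(1) in COMPROMISED_VERSIONS:
            hits.append(line.strip())
    return hits


def installed_version_is_compromised(package: str = "litellm") -> bool:
    """Check the package installed in the current environment, if any."""
    try:
        return metadata.version(package) in COMPROMISED_VERSIONS
    except metadata.PackageNotFoundError:
        return False
```

A clean result from the installed-version check says nothing about past builds, so the log and image review described in Step 1 is still needed for any environment that resolved dependencies on March 24.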

Step 2: Deploy Credential Mines in AI Environment Configuration — Before deploying the next version of your AI agent stack, seed the environment with Mine2 Credential Mines — fake API keys formatted to pass validation checks for OpenAI, Anthropic, AWS, and other services configured in your LiteLLM or Langflow instances. These mines travel with any future exfiltration attempt and provide immediate notification when stolen credentials are used. Deployment is a single-click operation through Mine2's console and requires no changes to production application code.

Step 3: Activate Cloud Mines in All Cloud Environments — Deploy Cloud Mines as decoy resources in every cloud account that uses AI agent services. Focus on S3 buckets with names that match your production naming conventions, IAM roles with names like LiteLLMServiceRole-prod, and Lambda functions named for common AI agent patterns. These specifically attract attackers who have stolen AI-stack-adjacent credentials and are conducting cloud reconnaissance.

Step 4: Instrument MineField on Internal AI Service Ports — AI agent frameworks often run on predictable internal ports (LiteLLM defaults: 4000, Langflow defaults: 7860). Deploy MineField on these ports in adjacent subnets and on non-production network segments. An attacker performing lateral movement after cloud credential theft will scan for these services — and MineField will catch them before they reach production.

Step 5: Implement Non-Human Identity Hygiene — Work toward eliminating long-lived static API keys in AI agent configurations. Use IAM roles with instance profiles instead of access keys in AWS deployments. Use Workload Identity Federation in GCP. Implement Azure Managed Identities. Where static keys are unavoidable, deploy Mine2 Credential Mines alongside them — the mines are the detection layer for the keys that can't yet be rotated.
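To find where long-lived AWS keys still sit in configuration, a simple scan can separate them from temporary credentials. The sketch relies on the AWS convention that long-term access key IDs begin with `AKIA` and temporary STS key IDs begin with `ASIA`; the `classify_aws_key_ids` helper is hypothetical, and the exact character class is a common scanner approximation rather than an official format definition.

```python
import re

# AKIA = long-term access key, ASIA = temporary (STS) access key.
_KEY_ID = re.compile(r"\b(AKIA|ASIA)[A-Z0-9]{16}\b")


def classify_aws_key_ids(text: str) -> list:
    """Return (line_number, redacted_key_id, kind) for each AWS key ID found in text."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in _KEY_ID.finditer(line):
            kind = "long-lived" if match.group(1) == "AKIA" else "temporary"
            # Keep only the first 8 characters so the report itself does not leak keys.
            findings.append((lineno, match.group(0)[:8] + "...", kind))
    return findings
```

Every "long-lived" finding is a candidate for replacement by a role or managed identity; until that happens, it marks a location where a decoy key alongside the real one is most useful.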

Step 6: Build a Deception-First Incident Response Playbook — Pre-configure automated response actions triggered by Mine2 alerts: a Cloud Mine alert triggers credential revocation for the IAM identity that accessed it; a Credential Mine alert triggers an investigation workflow in your SIEM; a MineField alert triggers network isolation of the source IP. Mine2 alerts provide the specificity needed to automate these responses without false positive risk.
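The mapping in Step 6 from alert type to response can be expressed as a small routing function. This is an illustrative sketch only: the `Alert` shape, the alert kind strings, and the action names are hypothetical, and the function returns a planned action rather than calling any real revocation, SIEM, or network API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    kind: str            # "cloud_mine", "credential_mine", or "minefield" (hypothetical labels)
    identity: str = ""   # IAM identity or credential label, when known
    source_ip: str = ""  # source address, when known


def plan_response(alert: Alert) -> tuple:
    """Return (action, target) for an alert, following the mapping described in Step 6."""
    if alert.kind == "cloud_mine":
        return ("revoke_credentials", alert.identity)
    if alert.kind == "credential_mine":
        return ("open_siem_investigation", alert.identity)
    if alert.kind == "minefield":
        return ("isolate_source_ip", alert.source_ip)
    # Unknown alert kinds fall back to a human decision instead of an automated action.
    return ("manual_review", alert.kind)
```

Keeping the decision separate from execution makes the playbook easy to test and review before any action is wired to production systems.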

The Signal That Cuts Through the Noise

The cybersecurity industry will respond to the TeamPCP LiteLLM attack with the standard prescription: patch faster, rotate credentials more frequently, implement software composition analysis, run dependency audits. All of this is correct. None of it is sufficient.

Supply chain attacks succeed because they weaponize trust. The LiteLLM package was trusted. The Trivy scanner was trusted. The CI/CD pipeline that ran both was trusted. Credentials exfiltrated through this trust chain are real credentials — and when an attacker uses them, they look like legitimate API calls from legitimate services.

The only detection that is definitionally immune to this legitimacy problem is deception detection. Cloud Mines don't care whether the IAM key is marked "known-bad" in any threat intelligence feed. Credential Mines don't require behavioral baselines. MineField doesn't need to distinguish legitimate from malicious scanning — because legitimate systems never scan it at all.

When TeamPCP's stolen credentials are used to probe your cloud environment, Mine2 will know. The only question is whether you've deployed the mines before the attacker shows up.

Protect your AI agent infrastructure with Mine2's deception layer: zero false positives, single-click deployment, zero performance impact. Deploy Mine2 in your environment today.

mine2 team

The MINE2 team consists of cybersecurity experts, researchers, and engineers dedicated to advancing threat detection and cyber deception technologies.

Recent Articles

React2Shell CVE-2025-55182: 766 Next.js Hosts Breached in Automated Credential-Theft Wave

Langflow Under Siege: AI Pipeline RCE Exploited in 20 Hours Exposes the Hidden Credential Crisis in ML Infrastructure

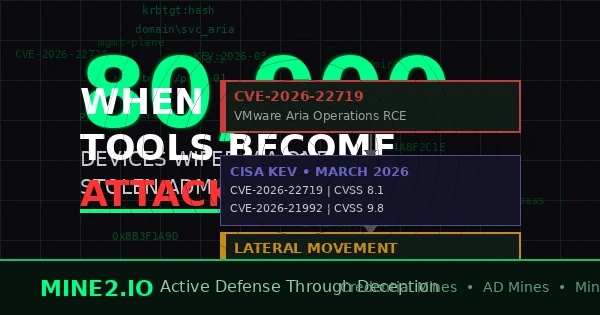

When Defenders' Tools Become Attack Vectors: The Management Platform Exploitation Crisis

Need Security Help?

Protect your organization with MINE2's cyber deception platform.